Tech rants: PC-s use way too much power in 2021

Welcome to 2021. We have:

- supply chain issues

- no reasonably priced GPU-s

- consumer-grade CPU-s with peak power consumption at 296W

- GPU-s that consume 350-400W of power under normal use

At the same time, we have made great leaps in CPU/GPU architectures and chip manufacturing technologies, which should result in faster and more efficient devices, right?

Well, yes. However, with fierce competition between AMD and Intel, and between AMD and NVIDIA, all reason is thrown out the window and power limits are raised in order to preserve the performance crown. In the end, all that matters is “but my CPU is 5% faster in this benchmark!” or “Oh yeah Intel has the performance crown even if it took 290W to get there”.

This all sounds absurd at a time when it’s clear that energy usage will become a big problem in the near future. Using more power every day also makes it harder to rely on renewable (not necessarily green!) energy sources, for the simple reason that building more capacity is expensive.

All that power, but at what cost?

What is often missing from this conversation is that it’s not only the CPU or the GPU that uses up a lot of energy while running.

When you have a hungry chip in your system, you will likely need a bigger cooler to dissipate all that heat. Whoops, more raw materials (aluminium, copper) required.

The GPU is so hot that the manufacturer had to put on a giant heatsink and multiple fans, and now the whole darn thing barely fits in your case. Same story.

Something has to deliver all that power as well. And just like that, the number of electrical components required on the GPU board or the motherboard has multiplied.

The hot air gets stuck in your PC case? Sounds like someone is going to need to make an investment into some PC fans.

And to finish it all off, you discover that your power supply cuts out during high loads, because 800W power peaks are acceptable now I guess. Time to go to your local PC parts store to get a new power supply.

You might look at your monthly electricity bill and not even notice the power required to run such a machine, but the thing is that the real cost comes from everywhere else. That cost is not low.

Okay, you convinced me, now what?

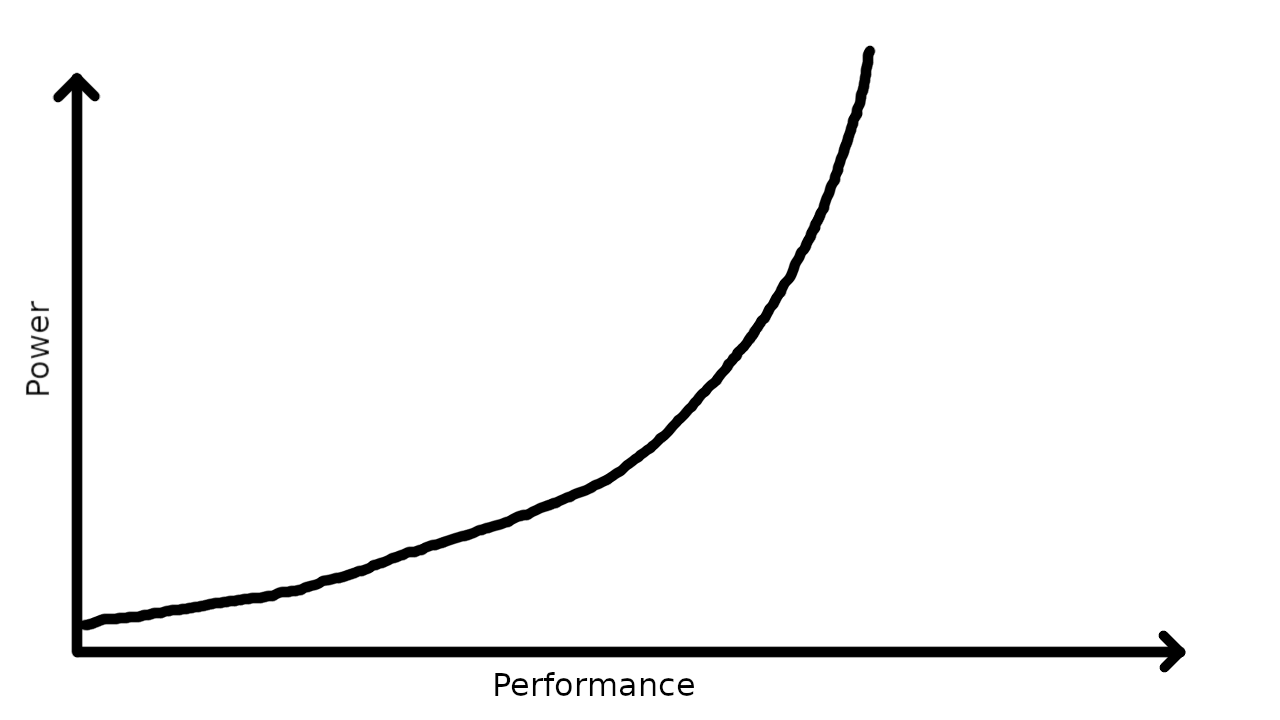

If you have already bought hardware that fits the above description, then there isn’t much to do other than limit its power usage. CPU-s and GPU-s generally follow this kind of rule:

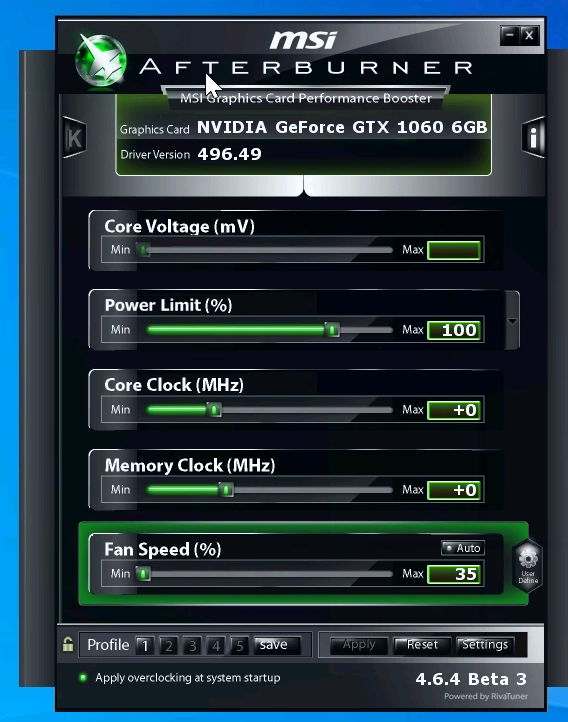

If you use Windows, then one way to make a positive change is to lower the power limit of your GPU. For AMD GPU-s, this is present in Radeon settings and is a simple percentage slider. For NVIDIA cards I usually opt for MSI Afterburner. While making any changes, have something like FurMark running in the background: it gives you a steady load, so you can compare the performance you get against the power usage readouts at each setting.

A modest reduction of the power limit will likely yield a small drop in performance, but a much bigger drop percentage-wise in the power consumption of the GPU.
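To put that trade-off into numbers, here is a minimal sketch of the arithmetic. The figures in the example call (a 70% power limit costing 7% of performance) are made-up placeholders, not measurements; plug in whatever your own benchmark run reports.

```python
def efficiency_change(perf_ratio: float, power_ratio: float) -> dict:
    """Compare a power-limited run against the stock configuration.

    perf_ratio:  performance relative to stock (0.93 means 7% slower)
    power_ratio: power draw relative to stock (0.70 means a 70% power limit)
    """
    if perf_ratio <= 0 or power_ratio <= 0:
        raise ValueError("ratios must be positive")
    return {
        # Work done per watt, relative to stock.
        "perf_per_watt": perf_ratio / power_ratio,
        # Energy for a fixed amount of work: power * time,
        # where time scales with 1 / performance.
        "energy_per_task": power_ratio / perf_ratio,
    }


# Hypothetical numbers for illustration only.
result = efficiency_change(perf_ratio=0.93, power_ratio=0.70)
print(f"performance per watt: {result['perf_per_watt']:.2f}x stock")
print(f"energy per task:      {result['energy_per_task']:.2f}x stock")
```

With those placeholder values the machine does roughly a third more work per watt, and a fixed task costs about a quarter less energy even though it takes slightly longer.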

For CPU-s, there are two types of options:

- configure power limits in UEFI settings (depends on your CPU and motherboard manufacturer)

- limit the CPU power usage using tools available in the operating system

Here are some examples of the knobs and tools that you can use to achieve this:

- AMD CPU-s and UEFI:

  - cTDP option in UEFI settings. Just set the TDP that you want to run the CPU at and you’re done! Allows you to run your 105W TDP CPU at 35W, for example.

  - AMD Precision Boost Overdrive (PBO) and UEFI. Sometimes the cTDP setting is not present, which means that you’ll have to go to the overclock settings and input the correct parameters to set the same limit.

- AMD and Linux:

  - cpufreq. Honestly, not the best way to do it since the steps don’t seem to be that granular, but it’s better than nothing.

- Intel and UEFI: supported CPU-s likely support similar techniques to AMD, but since I don’t have experience with Intel, I cannot say what exactly you can change.

- Intel and Windows/Linux: disable turbo boost. Peak performance will definitely suffer, but you end up running your CPU at the base clock, which is likely very close to the efficiency point of the CPU.

  - in Linux, use the intel_pstate driver.

  - in Windows, you can set the “Maximum processor state” under the legacy power options UI to 99%, which will disable turbo boost.

This list is not complete and there might be better options out there. Just go with the one that is easiest for you and prefer UEFI-level settings to any OS settings as those will persist even if you change your operating system.
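For the Linux options, the knobs live in sysfs, so a small script can flip them. The following is a rough sketch rather than a finished tool: it assumes the standard sysfs layout under /sys/devices/system/cpu, it needs root to write to the real files, and the function names and the `cpu_root` parameter are my own inventions (the parameter exists so the code can be tried against a scratch directory first). The settings are also reset on reboot.

```python
from __future__ import annotations

from pathlib import Path

# Standard location of the CPU sysfs tree on Linux.
SYSFS_CPU = Path("/sys/devices/system/cpu")


def set_intel_turbo(enabled: bool, cpu_root: Path = SYSFS_CPU) -> None:
    """Allow or forbid turbo boost via the intel_pstate driver."""
    no_turbo = cpu_root / "intel_pstate" / "no_turbo"
    # The file holds "1" when turbo is disabled and "0" when it is allowed.
    no_turbo.write_text("0" if enabled else "1")


def cap_max_frequency(max_khz: int, cpu_root: Path = SYSFS_CPU) -> list[int]:
    """Cap scaling_max_freq (in kHz) on every core via cpufreq.

    The requested value is clamped to the range the hardware reports.
    Returns the value written for each core.
    """
    written = []
    # cpu[0-9]* matches cpu0, cpu1, ... and skips the top-level
    # cpufreq/cpuidle directories.
    for policy in sorted(cpu_root.glob("cpu[0-9]*/cpufreq")):
        hw_min = int((policy / "cpuinfo_min_freq").read_text())
        hw_max = int((policy / "cpuinfo_max_freq").read_text())
        target = max(hw_min, min(max_khz, hw_max))
        (policy / "scaling_max_freq").write_text(str(target))
        written.append(target)
    return written
```

Run as root, `set_intel_turbo(False)` disables turbo boost on an Intel system using intel_pstate, and `cap_max_frequency(2_200_000)` asks every core to stay at or below 2.2 GHz (an arbitrary example value). The frequency cap goes through the generic cpufreq interface, so it is the part that applies to AMD systems as well.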

I changed my mind. Why should I care?

Using less power actually comes with a lot of benefits:

- smaller electricity bill (just don’t expect any dramatic changes)

- your PC will run much cooler now, negating the need for a big and wasteful cooling setup

- you can run your PC much quieter now as well, less heat to dissipate -> fans don’t need to run as fast now

- you can pick cheaper (in price, not quality) options when it comes to PC components since you don’t need all that overbuilt capacity.
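To see why the electricity bill alone won’t change dramatically, here is a quick back-of-the-envelope sketch. All three inputs in the example call are placeholders I made up (100 W saved, 4 hours of load per day, 0.20 per kWh in whatever your currency is), so substitute your own.

```python
def yearly_savings(watts_saved: float, hours_per_day: float, price_per_kwh: float) -> float:
    """Estimate the yearly cost saved by drawing fewer watts under load."""
    # Energy saved per year in kilowatt-hours.
    kwh_per_year = watts_saved * hours_per_day * 365 / 1000
    return kwh_per_year * price_per_kwh


# Hypothetical inputs for illustration only.
print(f"{yearly_savings(100, 4, 0.20):.2f} saved per year")
```

With those placeholder inputs the saving is 146 kWh, or about 29 currency units per year: nice to have, but small next to the cost of the extra cooling, power delivery and PSU capacity.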

Why do you care?

Once you limit the amount of resources available to you, you’ll soon discover that you can do a lot even with a small power budget. I’m running all my self-hosted services, gaming and work off of one decently configured PC that is quiet and yet powerful enough to perform well at any task I throw at it. If you can do all of that with a CPU that was originally designed for laptops and has a rated TDP of 65 watts, then why get anything more powerful?

Apple has joined the game

We were in an Intel-led stagnation, with new chips adding minor features and introducing small improvements in performance. AMD came out with the first Zen-based CPU-s in 2017, followed soon by APU-s that were used in laptop designs as well. At that point AMD had almost closed the performance gap in the laptop space, forcing Intel to up their core counts and the turbo boost power limits as well. With the introduction of Ryzen 4000 and 5000 series, AMD went above and beyond and managed to bring great CPU and GPU performance to the laptop space, with the one notable omission being Thunderbolt 3 support.

Then Apple came out of nowhere and provided heavy competition for both companies. The new Apple M1 chips are fast as hell for what they really are and consume so little power that the fans in the laptop rarely have to turn on. You also get the benefit of having an amazing battery life. That same design has also found use in the desktop lineup in the form of the new iMac, and who knows, maybe it will also end up in a proper workstation machine at some point?

While I don’t like the software stack that MacBooks ship with, and the poor repairability is a major downside for me, I still have to admit that Apple is moving in the right direction with their chip designs. Performing well without your laptop turning into a poor man’s version of a jet engine is exactly what we should be striving for.

Closing thoughts

I understand that we often end up using the latest and greatest hardware because we need to get our work done as fast as possible: less time spent on a task should mean more productivity. However, this has to become more sustainable, and continuing the trend that the “big boys” are following goes against that.

I hope that we will see more progress in this area, especially from companies other than Apple.
